Tag: Neural Networks

-

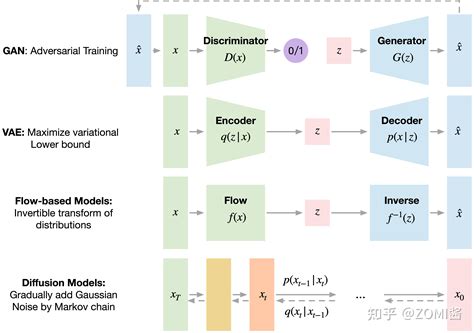

Peeking into the Neural Soul: Extracting Concepts from GPT-4

In the uncharted territory of artificial intelligence, understanding the inner workings of systems such as GPT-4 is paramount. OpenAI’s recent exploration into extracting high-level concepts from GPT-4 represents a leap towards demystifying these complex models. This method involves using sparse autoencoders to identify and interpret features within the model, thereby making the intricate processes more…

-

Cracking the Neural Code: Exploring Semantic Search and Interpretability in GPT-4

The field of artificial intelligence continues to advance at an impressive pace, with breakthroughs such as OpenAI’s GPT-4 pushing the boundaries of what neural networks can achieve. One of the more fascinating developments is the concept of high-level semantic search, a feature that could revolutionize how we interact with and understand AI models. This feature…

-

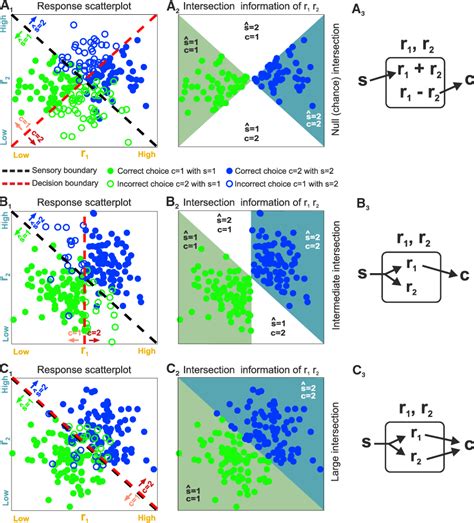

Exploring the Fascinating Intersection of Diffusion Models and Syntax Trees in Program Synthesis

The intersection of diffusion models and syntax trees is opening new frontiers in the domain of artificial intelligence and program synthesis. Researchers’ innovative use of these techniques, which are typically applied in different contexts like graphics and optimization algorithms, suggests a rich vein of untapped potential. By leveraging syntax trees, which capture the structure of programs, and integrating…

-

Deciphering the Intricacies of Language Models: Unveiling the Engineering Marvels

The realm of artificial intelligence continues to captivate us with its enigmatic capabilities, offering a peek into the intricate workings of language models. User comments on a recent study of the mind of a large language model shed light on the parallels drawn between human communication and AI behavior. The subtle nuances in conveying…

-

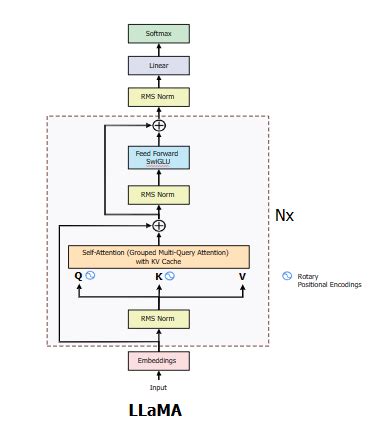

Unveiling the Secrets of Llama3: A Deep Dive into Next-Gen Neural Network Architectures

A recent GitHub repository showcasing an implementation of Llama3 from scratch has sparked a wave of curiosity in the AI community. As enthusiasts eagerly dive into the code and documentation, discussions have emerged on the evolution of neural network (NN) architectures and the ongoing debate surrounding transformer models. One of the key takeaways…

-

The Essential Reading List for AI Enthusiasts: A Deep Dive Into Ilya Sutskever’s Recommendations

In the ever-evolving landscape of artificial intelligence research, the conversation sparked by Ilya Sutskever’s list of essential papers continues to intrigue the AI community. As users dissect the significance and practicality of the recommended readings, diverse perspectives emerge, shedding light on the nuances of mastering AI knowledge. Amidst the discussions, the debate on prerequisites surfaces,…

-

Falcon 2 Outperforms Meta’s Llama 3: A Closer Look at AI Models

In the realm of AI models, discussions surrounding performance comparisons and licensing intricacies play a crucial role in shaping the landscape. The recent release of Falcon 2 by the UAE’s Technology Innovation Institute sparked debate, especially over how it compares with Meta’s Llama 3. User comments highlighted key points, showcasing a nuanced view of the AI model ecosystem.…

-

The Alchemy of AI: Blending Models for Enhanced Capabilities

AI and deep learning are increasingly being likened to an arcane practice, one where knowledge, mystery, and a dash of unpredictability blend to create results that are surprisingly effective yet difficult to comprehend fully. This AI ‘alchemy’ involves merging various models to produce new capabilities in neural networks, a process that is not only technically profound…