Tag: AI Safety

-

Assessing AI Safety: Why It Matters and the Looming Risks

The rise of AI technology, and particularly the concept of superintelligence, has become a compelling topic of discussion over the past decade. An enriching yet contentious dialogue has emerged around Nick Bostrom’s book “Superintelligence: Paths, Dangers, Strategies”, and opinion on it remains divided and intensely scrutinized. As we stand ten years after the…

-

Reflecting on AI Safety: 10 Years After ‘Superintelligence’

It has been ten years since Nick Bostrom’s ‘Superintelligence: Paths, Dangers, Strategies’ was published, a work that undoubtedly sparked intense discourse and debate about the future implications of Artificial Intelligence (AI). The book’s central thesis—that superintelligent AI could pose existential risks—remains a significant concern for experts and laypeople alike. This reflection on AI safety, through…

-

AI and Consciousness: Navigating the Debate on Lifelike Digital Minds

The quest to model human consciousness in artificial intelligence (AI) systems has been a topic of fascination and intense debate for decades. With the evolution of AI, particularly in the realm of large language models (LLMs), the potential to create digital beings that closely replicate human cognition seems increasingly within reach. Yet, this ambition brings…

-

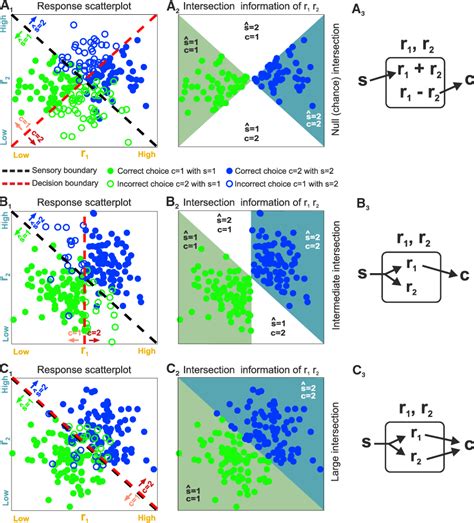

Peeking into the Neural Soul: Extracting Concepts from GPT-4

In the uncharted territory of artificial intelligence, understanding the inner workings of systems such as GPT-4 is paramount. OpenAI’s recent exploration into extracting high-level concepts from GPT-4 represents a leap towards demystifying these complex models. This method involves using sparse autoencoders to identify and interpret features within the model, thereby making the intricate processes more…

-

Cracking the Neural Code: Exploring Semantic Search and Interpretability in GPT-4

The field of artificial intelligence continues to advance at an impressive pace, with breakthroughs such as OpenAI’s GPT-4 pushing the boundaries of what neural networks can achieve. One of the more fascinating developments is the concept of high-level semantic search, a feature that could revolutionize how we interact with and understand AI models. This feature…

-

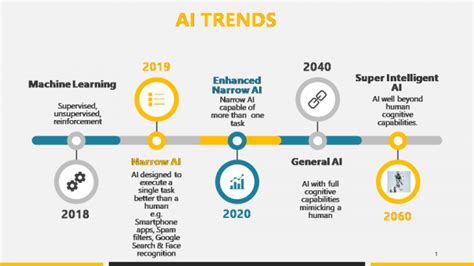

The Evolution of AI: Moving Beyond Internet Training

The landscape of AI training is undergoing a significant transformation, moving beyond the traditional reliance on internet data. Historically, LLMs have been trained on vast datasets scraped from the web, contributing to the impressive leap in capabilities we see today. However, this methodology is facing intense scrutiny and evolving discussions within both expert and public…

-

OpenAI Controversy: Ethical Oversight or a Play of Power?

In tech circles, the narrative surrounding recent events at OpenAI has been nothing short of a dramatic tale filled with intrigue, power plays, and ethical dilemmas. At the heart of this swirling controversy is Sam Altman, the CEO of OpenAI, whose brief ousting and subsequent reinstatement have prompted many to reflect on the role of…

-

Sam Altman’s OpenAI Drama: Manipulation, Loyalty, and Financial Interests

In November 2023, the tech world was shaken by the unexpected firing of Sam Altman, the CEO of OpenAI. What followed was a whirlwind of events that laid bare the inner workings of one of the most intriguing tech companies of our time. While on the surface, this might have seemed just another CEO ousting,…

-

Unveiling the Complexities of OpenAI Model Spec: Perspectives, Challenges, and Ethical Dilemmas

OpenAI’s Model Spec has sparked a wave of commentary, shedding light on the multi-faceted realm of AI ethics and transparency. The user comments reflect diverse viewpoints, from the potential applications of AI in regulating content to the implications of AI behaviors in different societal contexts. It’s fascinating to witness the convergence of opinions on whether…

-

The Hidden Costs of SB-1047 on Open Source AI Development

Recent legislative developments in California, particularly the introduction of Senate Bill 1047 (SB-1047), have stirred considerable debate within the tech community. Ostensibly aimed at enhancing AI safety, the bill requires AI developers to undergo rigorous compliance and reporting processes, which could profoundly affect the grassroots-level innovation especially evident in open source communities. This…